People often fail to practice what they preach, and this pattern of behavior may stem from specific biological processes rather than simply bad character. According to a new study published in the journal Cell Reports, people who behave dishonestly while criticizing the same behavior in others show reduced activity in certain areas of the brain. The research suggests that aligning one’s behavior with one’s personal moral standards requires active mental integration.

Social harmony is highly dependent on people maintaining consistent ethical standards. When people act contrary to the very rules they use to judge others, they risk damaging their own reputations and social relationships. But this kind of hypocrisy happens all the time in everyday life, from small lies at work to major political scandals.

Most ethical choices involve a fundamental trade-off between personal benefit and doing the right thing. When people make decisions for themselves, they face a direct temptation to secure rewards. When they watch others make decisions, they don’t face the same temptation. This difference in perspective makes it easier to hold others to high standards.

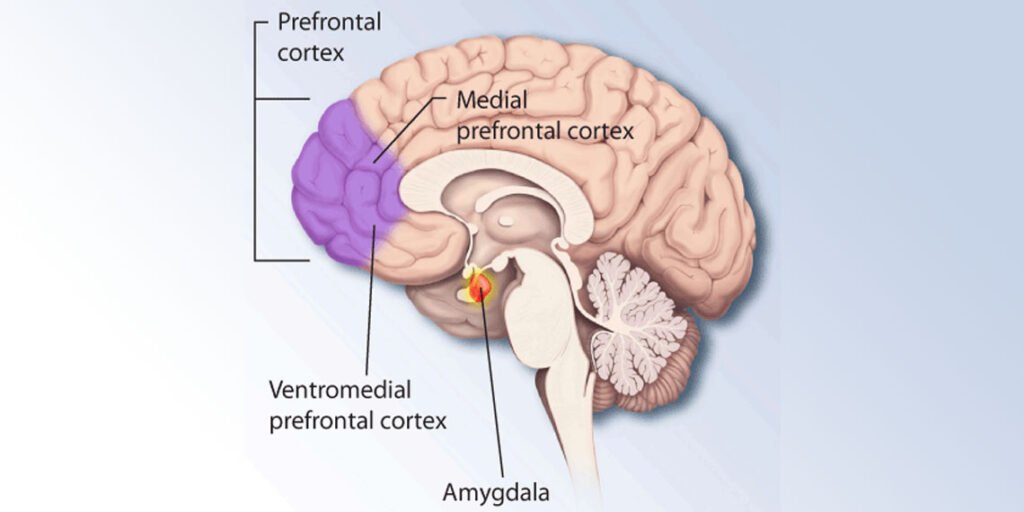

Valley Liu, a researcher at the University of Science and Technology of China, led a research team to find out why this disconnect occurs. “As neuroscience researchers, we wanted to understand why knowing the right thing doesn’t necessarily lead to doing it,” said co-author Xiaochu Zhang, also of the University of Science and Technology of China. The team suspected the answer might lie in a brain region called the ventromedial prefrontal cortex.

The ventromedial prefrontal cortex is located deep in the lower frontal lobe of the brain. It acts as an information hub for decision making, helping individuals assess risks, weigh potential rewards, and process social rules.

To test their idea, the research team designed two different tasks for a group of 58 participants. The first task required participants to act as instructors and help learners identify hidden numbers on digital cards. The instructor could choose to report the numbers honestly or lie to the learner.

The game was structured in such a way that lying allowed instructors to earn more money. This created a direct conflict between economic interests and honest behavior. While making these choices, participants lay inside a functional magnetic resonance imaging scanner. The machine uses powerful magnetic fields to track blood flow in the brain, revealing which areas are active at any given moment.

In the second task, the same participants observed another person playing the exact same card game. They were asked to rate that person’s decisions on a scale from extremely immoral to extremely moral. They completed this judgment task while their brain activity was again monitored in the scanner.

The scientists used statistical models to estimate how much each person valued profit compared to honesty. The results showed a clear gap between the two tasks. When participants made their own choices, they were significantly influenced by the potential for economic benefit. When evaluating others, they based their judgments strictly on whether the person they observed was honest.
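The article does not say which statistical model the researchers used. As a rough illustration only, the idea of weighing profit against honesty can be sketched with a simple logistic choice model fitted by grid search. The function names, the two weights, and the trial data below are all hypothetical and are not taken from the study.

```python
import math


def lie_probability(w_profit, w_honesty, extra_profit):
    """Probability of lying when lying pays `extra_profit` more than honesty."""
    # Net pull toward lying: weighted gain minus the weight placed on honesty.
    utility = w_profit * extra_profit - w_honesty
    return 1.0 / (1.0 + math.exp(-utility))


def log_likelihood(w_profit, w_honesty, trials):
    """Log-likelihood of observed (extra_profit, lied) trials under the model."""
    total = 0.0
    for extra_profit, lied in trials:
        p = lie_probability(w_profit, w_honesty, extra_profit)
        p = min(max(p, 1e-9), 1.0 - 1e-9)  # keep log() finite
        total += math.log(p if lied else 1.0 - p)
    return total


def fit_weights(trials, steps=50, max_weight=5.0):
    """Grid-search the (w_profit, w_honesty) pair that best explains the trials."""
    grid = [max_weight * i / steps for i in range(steps + 1)]
    candidates = ((wp, wh) for wp in grid for wh in grid)
    return max(candidates, key=lambda w: log_likelihood(w[0], w[1], trials))


# Made-up trials: (extra money earned by lying, whether the participant lied).
always_lies = [(1.0, True), (2.0, True), (3.0, True)]
never_lies = [(1.0, False), (2.0, False), (3.0, False)]

print(fit_weights(always_lies))  # weights lean entirely toward profit
print(fit_weights(never_lies))   # weights lean entirely toward honesty
```

Fitting the same kind of model separately to a person’s own choices and to their ratings of others would give two sets of weights, and the difference between them is one way to put a number on the gap the study describes.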

The brain scans revealed physical differences between people whose moral standards were consistent and those whose standards were not. The researchers looked at specific patterns of brain activity, not just the overall brightness of the brain scans. In morally consistent people, the ventromedial prefrontal cortex showed similar patterns of activity during both the action and judgment tasks.
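To make the distinction between patterns and overall brightness concrete, here is a minimal sketch that compares two activity patterns with a Pearson correlation. The numbers stand in for activity at four locations and are invented for illustration. The article does not describe the exact similarity measure the researchers used, so the correlation is an assumption.

```python
import math


def pattern_similarity(a, b):
    """Pearson correlation between two equal-length activity patterns."""
    n = len(a)
    if n != len(b) or n < 2:
        raise ValueError("patterns must have the same length (at least 2)")
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        raise ValueError("a flat pattern has no defined correlation")
    return cov / math.sqrt(var_a * var_b)


# Invented activity values at four locations during the action task.
action = [1.0, 2.0, 3.0, 4.0]

# Same shape during judgment, just uniformly brighter.
judgment_matching = [2.0, 3.0, 4.0, 5.0]

# Same average brightness as `action`, but the pattern is reversed.
judgment_mismatched = [4.0, 3.0, 2.0, 1.0]

print(pattern_similarity(action, judgment_matching))
print(pattern_similarity(action, judgment_mismatched))
```

The mismatched example has exactly the same average signal as the action pattern, yet the two patterns are opposite in shape. A measure based only on average brightness would miss that difference.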

For morally inconsistent people, activity patterns did not match across the two situations. The ventromedial prefrontal cortex typically communicates with other brain regions that process rewards and ethical rules. In hypocritical participants, connections between this region and those other regions were weaker while they made their own choices.

Their brains simply weren’t putting the necessary information together. This lack of connection suggests that a hypocritical person may fully understand the rules of right and wrong but fail to apply those concepts to their own choices. “People who exhibit moral conflict are not necessarily blind to their own moral principles; they are simply biologically incapable of considering them and applying them to their moral behavior,” Chan says.

Next, the research team wanted to see if changing the activity in this brain region would change a person’s behavior. They recruited a new group of 52 participants for a second experiment. This time, they used a non-invasive technique called transcranial temporal interference stimulation to send specific electrical frequencies deep into the brain.

This technique involves placing electrodes on the scalp to deliver high-frequency electrical currents through the head. These currents oscillate too fast to affect the surface of the brain. Where the currents intersect deep within the tissue, they create a slower wave that changes the way certain brain cells communicate.
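The slower wave comes from a familiar effect: two oscillations at slightly different frequencies add up to a signal whose strength rises and falls at the difference between them. The sketch below shows only that arithmetic for two equal-strength sine waves. The 2000 Hz and 2010 Hz values are hypothetical, since the article does not report the frequencies used, and real stimulation also depends on electrode placement and tissue properties that this ignores.

```python
import math


def summed_signal(t, f1, f2):
    """Sum of two equal-amplitude sine waves at time t (seconds)."""
    return math.sin(2 * math.pi * f1 * t) + math.sin(2 * math.pi * f2 * t)


def envelope(t, f1, f2):
    """Slowly varying amplitude of the summed signal at time t.

    Follows from sin(A) + sin(B) = 2 * sin((A + B) / 2) * cos((A - B) / 2).
    """
    return 2.0 * abs(math.cos(math.pi * (f1 - f2) * t))


def envelope_frequency(f1, f2):
    """Rate (Hz) at which the envelope rises and falls."""
    return abs(f1 - f2)


# Hypothetical carrier frequencies, chosen only for illustration.
f1, f2 = 2000.0, 2010.0

print(envelope_frequency(f1, f2))  # slow rhythm produced where the currents overlap
print(envelope(0.0, f1, f2))       # envelope at its peak
print(envelope(0.05, f1, f2))      # envelope at its trough, half a cycle later
```

Each input wave is far faster than the slow rhythm, which appears only where the two overlap. That is why the effect can be aimed at deep tissue without driving the surface.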

Half of the participants received actual stimulation targeting the ventromedial prefrontal cortex. The other half were given a placebo treatment known as sham stimulation. After the procedure, all participants completed the same card game and judgment task.

People who received real brain stimulation showed a larger gap between their actions and judgments. In other words, the researchers were able to make people more hypocritical by interfering with the normal functioning of this brain region. This suggests that the ventromedial prefrontal cortex plays a causal role in moral consistency.

These results suggest that moral consistency is not an automatically acquired property. It is a biological process that relies on the brain’s ability to synchronize different types of information. “Our findings suggest that moral consistency should be treated like a skill that can be strengthened through intentional decision-making,” said lead author Hongwen Song from the University of Science and Technology of China.

This study has some limitations. The researchers only considered specific scenarios that pitted financial interests against honesty, and all participants were Chinese adults. Different cultures may handle moral dilemmas in very different ways.

The scenarios also focused entirely on the perspective of the decision maker and the outside observer. The study did not measure how these behaviors affect the person being lied to. Adopting the victim’s perspective may change the way the brain evaluates the situation.

A lack of moral consistency may also reflect intentional opportunistic strategies rather than unconscious cognitive biases. Some people may profess high standards publicly to maintain their image, but secretly engage in bad behavior for personal gain. Future research will attempt to disentangle these specific personality traits from general brain network activity.

The authors note that understanding these brain networks could ultimately help educators design better ways to teach ethical reasoning. Recognizing the biological limits of moral integration could also help programmers develop artificial intelligence systems that make consistent ethical choices.

The study, “Moral disagreement is based on insufficient representation of vmPFC tasks and connections,” was authored by Valley Liu, Zhuo Kong, Jiaxin Fu, Lihao Zheng, Isaac Wang, Min Wang, Yifei Du, Lin Zuo, Bensheng Qiu, Chongyi Zhong, Lusha Zhu, Zhen Yuan, Xiaochu Zhang, and Hongwen Song.